The girl in the passenger seat clearly has a look of “we knew this was going to happen”, too.

No worries, I may have just been unclear considering multiple people appear to have downvoted my comment.

That’s what I’m saying. It has anticheat, and it runs on Linux without issue.

I wouldn’t say “any” major games. Helldivers 2 is a notable exception.

Most users of Windows aren’t editing the registry, no matter what problems they encounter.

For power users that do use regedit, I’d argue there’s still a gap between that and using a shell. The registry can be edited entirely with the Windows graphical utility, after all.

That’s certainly true. If I may ask, what point are you trying to make?

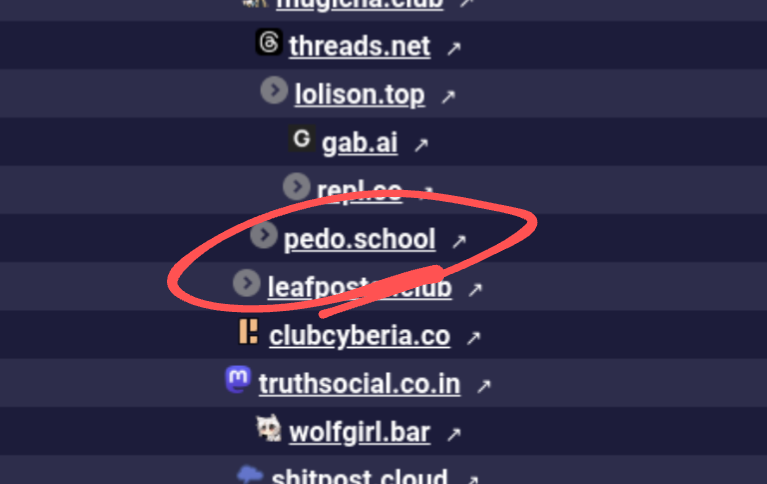

Jesus Christ.

I zoom in and this is the FIRST thing I see. What in the god damned shit?

Seems legit as a concept, though the author is giving weird vibes.

I’m not sure what “globohomo” means but it sounds like a 4chan homophobic term. Additionally the author says they wanted a search engine giving results without “political inclinations”, which reads to me as “reality has a liberal bias and I don’t like that”.

I’ll pass on this for now.

Thank you, I really appreciate that!

Thanks for your input. I received the message that my comment was removed from “automod@lemmy.world”, so that combined with the fact the community’s rule 2 was “no tiktok posts” led me to believe it was a .world action. Your take makes more sense.

That’s helpful, and part of what I’ve found, but I don’t think that’s what I’m looking for.

A comment of mine was removed earlier today and “rule 2” was cited, but I don’t see any relation to the rules in the TOS.

That would require that it be a social media platform, as opposed to a money laundering front.

Oh whoops! 😬 I hope you get the answer you needed!

What on earth is that link at the bottom of your comment? Are you…licensing it?

I’ll explain for you, because there’s a lot of misinformation around.

What is being called AI these days is various companies’ version of what’s called an LLM – Large Language Model. Put simply, an LLM is a very sophisticated piece of software that takes what is asked of it to determine what is statistically the most likely sequence of words to follow as an answer.
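To make "statistically the most likely sequence of words" concrete, here's a toy sketch. It is nowhere near a real LLM (those use neural networks trained on enormous amounts of text); it just counts which word follows which in a tiny made-up corpus and always picks the most common follower. The function names and the corpus are invented purely for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def continue_text(follows, prompt, length=5):
    """Repeatedly append the most frequent follower of the last word."""
    words = prompt.lower().split()
    for _ in range(length):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # the last word never appeared mid-text in training
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical training text, made up for this example.
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigrams(corpus)
print(continue_text(model, "the dog", length=3))  # prints "the dog sat on the"
```

Note that nothing in there checks whether the output is true. It only knows what tends to come next.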

This means you can ask a question the way you’d ask a human, and the way it answers will closely mirror how a person would answer (as opposed to stuff like Google Assistant or Siri, where you need to ask a question a specific way to get a decent answer).

Note, however, that at no point did I say that an LLM is accurate. This is the fatal issue that proponents of this kind of AI never mention. LLMs don’t have any mechanism to retrieve information or verify the truthfulness of the answers they give. You wind up seeing a lot of answers from this kind of AI that are either partially or completely wrong.

My favorite example is the result you get when googling “african countries that start with the letter K”. Someone posted the answer they got from an LLM to a forum online, which said that there is no such country, and that became the top Google result…despite the fact that Kenya obviously exists and starts with the letter K.

Essentially, LLMs are really fascinating in how well they approximate human speech – but they have absolutely no intelligence behind them. Proponents of this tech as AI either ignore this, or outright lie about it. As a result, a lot of companies have started using this tech to replace their support teams and/or the search functionality of their websites. I’m sure you can imagine the negative effects this has caused.

Jesus. What a shit heel, trying to enlist people during graduation. The fucking audacity.

Rocko?!

It’s honestly unkind to include it in the quote from her. Written down, it is seriously hard to read. But spoken aloud, your brain will filter “filler words” like that out without needing to think too hard about it.